Load the TensorBoard notebook extension with `%load_ext tensorboard`.

Import TensorFlow, datetime, and os: `import tensorflow as tf`, `import datetime`, `import os`.

Download the FashionMNIST dataset and scale it: `fashion_mnist = tf.keras.datasets.fashion_mnist`, then `(x_train, y_train), (x_test, y_test) = fashion_mnist.load_data()`.

When TensorBoard runs inside a Docker container, also pass `--bind_all` to `%tensorboard` to expose the port outside the container.
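The imports of `datetime` and `os` suggest how such a setup is commonly organised: each run gets its own timestamped log directory, and "scaling" the dataset means mapping raw pixel intensities into the range [0, 1]. The following is a minimal sketch under those assumptions. The helper names `make_log_dir` and `scale_pixels`, the `logs/fit` root, and the timestamp format are all hypothetical and are not part of TensorFlow or TensorBoard.

```python
import datetime
import os
from typing import List, Optional, Sequence


def make_log_dir(root: str = os.path.join("logs", "fit"),
                 now: Optional[datetime.datetime] = None) -> str:
    """Return a per-run log directory path, e.g. logs/fit/20240131-093000.

    Hypothetical helper: the root and timestamp format are assumptions.
    """
    now = now or datetime.datetime.now()
    return os.path.join(root, now.strftime("%Y%m%d-%H%M%S"))


def scale_pixels(image: Sequence[Sequence[int]]) -> List[List[float]]:
    """Scale 8-bit pixel values (0..255, assumed) to floats in [0.0, 1.0]."""
    return [[value / 255.0 for value in row] for row in image]
```

With a real dataset the same scaling would normally be applied to the whole array at once rather than row by row. The list version only keeps the sketch free of dependencies.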

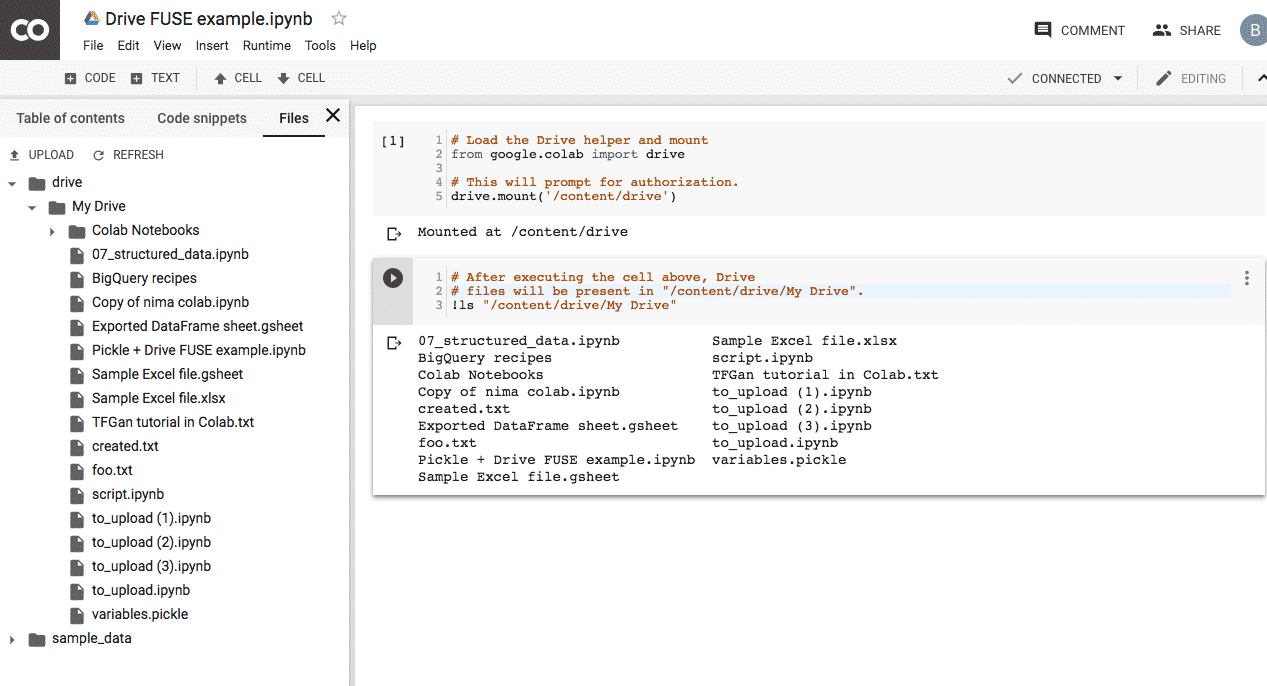

TensorBoard can be used directly within notebook experiences such as Colab and Jupyter. This can be helpful for sharing results, integrating TensorBoard into existing workflows, and using TensorBoard without installing anything locally.

Start by installing TF 2.0 and loading the TensorBoard notebook extension.

For Jupyter users: If you've installed Jupyter and TensorBoard into the same virtualenv, then you should be good to go. If you're using a more complicated setup, like a global Jupyter installation and kernels for different Conda/virtualenv environments, then you must ensure that the `tensorboard` binary is on your `PATH` inside the Jupyter notebook context. One way to do this is to modify the `kernel_spec` to prepend the environment's `bin` directory to `PATH`.

For Docker users: In case you are running a Docker image of Jupyter Notebook server using TensorFlow's nightly, it is necessary to expose not only the notebook's port, but also TensorBoard's port. Thus, run the container with a command beginning `docker run -it -p 8888:8888 -p 6006:6006 \`, where `-p 6006` is the default port of TensorBoard. This will allocate a port for you to run one TensorBoard instance. To have concurrent instances, it is necessary to allocate more ports.

The classifier-free guidance sampling function from the `text2im.ipynb` notebook evaluates `model_out = model(combined, ts, **kwargs)`, separates the output into `eps` and `rest`, and splits the noise prediction in half with `cond_eps, uncond_eps = th.split(eps, len(eps) // 2, dim=0)`. The two halves are then combined as `half_eps = uncond_eps + guidance_scale * (cond_eps - uncond_eps)`. The model kwargs it receives hold the conditional and unconditional entries, each repeated `batch_size` times, on `device`.
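To make the guidance formula concrete, here is a small dependency-free sketch of the combination step. It uses plain Python lists instead of tensors, and the function name `guided_eps` is hypothetical. Copying the guided result into both halves of the batch is an assumption made here so the output keeps the input's batch size, since the corresponding line is cut off in the excerpt.

```python
from typing import List, Sequence


def guided_eps(eps: Sequence[Sequence[float]],
               guidance_scale: float) -> List[List[float]]:
    """Combine conditional and unconditional noise predictions.

    `eps` is a batch whose first half holds conditional predictions and
    whose second half holds the matching unconditional predictions.
    """
    if len(eps) % 2 != 0:
        raise ValueError("batch must contain an even number of rows")
    half = len(eps) // 2
    cond_eps, uncond_eps = eps[:half], eps[half:]

    # half_eps = uncond_eps + guidance_scale * (cond_eps - uncond_eps)
    half_eps = [
        [u + guidance_scale * (c - u) for c, u in zip(c_row, u_row)]
        for c_row, u_row in zip(cond_eps, uncond_eps)
    ]

    # Assumption: repeat the guided half so the batch size is unchanged.
    return half_eps + [list(row) for row in half_eps]
```

With `guidance_scale = 1.0` the result equals the conditional prediction, and with `0.0` it equals the unconditional one. Larger values push the prediction further along the direction from unconditional to conditional.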

Thanks! I can see that the sampling part is slightly different from yours, adding the `model_fn` function to the sample loop. Is this related to the fact that they just do classifier-free guidance (`cond_fn=None`) rather than CLIP guidance like in your Colab? Also, I have tried to combine the last two, and the results seem to be better, as if CLIP guidance introduces too much randomness for the small model.

Unless I am missing something, the `model_fn` function is added to the sample loop in both notebooks called `text2im.ipynb`. The sampling code there creates the text tokens to feed to the model, creates the classifier-free guidance tokens (empty) as `uncond_tokens, uncond_mask`, packs the tokens together into model kwargs, and samples a batch of shape `(full_batch_size, 3, options["image_size"], options["image_size"])`.

The notebook linked in my first post is the classifier-free guidance one, with `cond_fn=None`. When `cond_fn` is not `None`, I assume you are looking at the CLIP-guided approach.

I would be glad to test your combination and see whether the results are better, but I need to see the diff of what you did to understand it. The black cat with white paws looks nice.
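For readers following the thread, the token packing step can be sketched roughly as follows. This is only an illustration: `pad_tokens_and_mask` is a hypothetical stand-in for the tokenizer's padding helper, the pad id of `0` and the truncation to `text_ctx` are assumptions, and the real notebook wraps these lists in tensors on `device`.

```python
from typing import Dict, List, Tuple


def pad_tokens_and_mask(tokens: List[int], text_ctx: int,
                        pad_id: int = 0) -> Tuple[List[int], List[bool]]:
    """Pad (or truncate) tokens to text_ctx and mark real positions as True.

    Hypothetical helper; pad_id=0 is an assumption for illustration.
    """
    kept = tokens[:text_ctx]
    padding = text_ctx - len(kept)
    return kept + [pad_id] * padding, [True] * len(kept) + [False] * padding


def pack_model_kwargs(tokens: List[int], text_ctx: int,
                      batch_size: int) -> Dict[str, list]:
    """Stack batch_size conditional rows, then batch_size unconditional rows."""
    cond_tokens, cond_mask = pad_tokens_and_mask(tokens, text_ctx)
    # Classifier-free guidance tokens: an empty prompt.
    uncond_tokens, uncond_mask = pad_tokens_and_mask([], text_ctx)
    return {
        "tokens": [cond_tokens] * batch_size + [uncond_tokens] * batch_size,
        "mask": [cond_mask] * batch_size + [uncond_mask] * batch_size,
    }
```

The resulting batch has `2 * batch_size` rows, which is why the sample shape uses `full_batch_size`. The first half drives the conditional prediction and the second half the unconditional one.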
